The Cancer That Has No Name — And the AI That Finally Answers It

Imagine sitting in a hospital room and hearing your oncologist say: “We know you have cancer. We can see it has spread. But we don’t know where it started — and we may never find out.”

This is the reality for roughly three to five percent of all cancer patients worldwide. Their condition has a clinical name — Cancer of Unknown Primary, or CUP — but that name is really just a formal way of saying: medicine is stumped. The tumor has already disseminated through the body. Metastases appear in the lungs, the liver, the lining of the abdomen. But the original cell — the one that first turned malignant and set everything in motion — has vanished into the noise.

For these patients, the stakes couldn’t be higher. Modern cancer treatment is largely site-specific. Immunotherapy that works brilliantly for lung cancer often does little for ovarian cancer. A targeted therapy designed for colorectal cancer may do nothing against breast cancer. Without knowing where the cancer came from, oncologists are flying blind, forced to guess or to fall back on broad, empirical chemotherapy that often buys little time.

The median survival for an unfavorable CUP case: eight months. Fewer than one in five patients survives beyond a year.

Now, a new generation of AI is learning to see what human pathologists cannot.

The Detective Work of Pathology

To understand why CUP is so hard to solve, you need to appreciate what a pathologist actually does — and how limited that work has been until very recently.

When cancer spreads to a new organ, the tumor cells there often carry visual fingerprints of their origin. An ovarian cancer cell that has colonized the lung still looks different from a primary lung cancer cell — slightly different shape, different nuclear structure, different way it clusters with neighboring cells. A trained pathologist, staring through a microscope at cells stained with hematoxylin and eosin, can sometimes detect these differences and make an educated guess.

But the key word is sometimes. Even the most experienced pathologists correctly identify the tissue of origin from cytology images (thin smears of cells from fluid drawn from around the lungs or abdomen) only about 60 to 70 percent of the time. Junior pathologists do significantly worse. And crucially, there is enormous variation between examiners: studies show that when four pathologists independently analyze the same set of images, all four reach the same conclusion in fewer than one in four cases.
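The “fewer than one in four” figure is a raw unanimity rate: the share of cases on which every reader gives the same answer. Here is a minimal Python sketch of that calculation. The function name and the four-reader labels are hypothetical, illustrative data, not figures from any study.

```python
def unanimous_agreement_rate(readings):
    """Fraction of cases on which every reader assigned the same label.

    `readings` is a list of cases; each case is a list of labels,
    one per pathologist.
    """
    if not readings:
        raise ValueError("need at least one case")
    unanimous = sum(1 for case in readings if len(set(case)) == 1)
    return unanimous / len(readings)


# Hypothetical labels from four readers on four cases (illustrative only).
cases = [
    ["lung", "lung", "lung", "lung"],              # all four agree
    ["ovary", "lung", "ovary", "ovary"],           # one dissent
    ["pancreas", "stomach", "pancreas", "colon"],  # split
    ["breast", "breast", "lung", "breast"],        # one dissent
]
print(unanimous_agreement_rate(cases))  # 0.25
```

Raw unanimity is a strict yardstick. Unlike chance-corrected statistics such as Fleiss’ kappa, it does not adjust for agreement that would occur by chance.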

Immunohistochemistry — staining cells with antibodies that react to specific proteins — helped. But it could only identify the primary tumor in fewer than 30 percent of CUP cases even when using a panel of 20 different stains. Next-generation genomic sequencing was more powerful, but expensive, slow, and practically inaccessible in much of the world.

The problem, at its core, is one of pattern recognition at enormous scale and with great subtlety. The visual information embedded in a cytology smear is vast. The biological differences between, say, pancreatic cancer cells and lung cancer cells, when seen in fluid rather than in a tissue biopsy, are subtle: a matter of textures and arrangements invisible to the untrained eye, and inconsistently detected even by the trained one.

This is exactly the kind of problem where biological data meets computational intelligence. This is the biocomputer moment.

TORCH: Teaching a Machine to Read Life’s Fingerprints

In April 2024, researchers from four major Chinese hospitals published results in Nature Medicine that represent one of the most clinically meaningful demonstrations of medical AI to date.

Their system is called TORCH — Tumor Origin differentiation using Cytological Histology. And where human pathologists see uncertain shadows, TORCH sees signal.

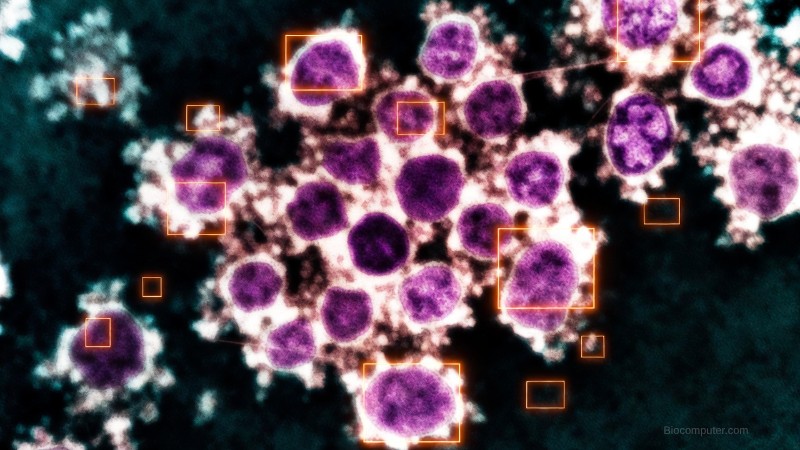

The training data alone is staggering: 57,220 cytological smear images drawn from 43,688 patients across 13 years of clinical records. These weren’t curated research samples — they were real-world images from real fluid samples, including the blurry ones, the under-stained ones, the diagnostically ambiguous ones that cause pathologists to pause and reach for the phone.

TORCH was designed for a specific and critical scenario: the patient who arrives with fluid in their chest or abdomen, cells visible in that fluid, and no known primary tumor. It takes the cytological image, combines it with three simple clinical variables — patient age, sex, and sample site (chest vs. abdomen) — and produces a ranked prediction of which organ system most likely gave rise to the cancer.
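To make the idea of combining an image with clinical variables concrete, here is a minimal late-fusion sketch in Python. It is not TORCH’s published architecture. It assumes an image model has already produced one score (logit) per organ system, and the label set, the feature encoding, the weights, and the helper names (`encode_clinical`, `fuse_and_rank`) are all hypothetical.

```python
import math

# Hypothetical organ-system labels; TORCH's real label set may differ.
CLASSES = ["digestive", "respiratory", "female_reproductive", "breast", "other"]


def encode_clinical(age, sex, site):
    """Encode age, sex, and sample site as numbers (illustrative encoding)."""
    return [
        age / 100.0,                        # age, roughly scaled to 0-1
        1.0 if sex == "female" else 0.0,    # sex flag
        1.0 if site == "abdomen" else 0.0,  # 1 = abdominal, 0 = chest sample
    ]


def softmax(xs):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_and_rank(image_logits, clinical, weights):
    """Late fusion: add a linear clinical term to each class's image logit,
    then return (class, probability) pairs sorted best-first."""
    fused = [
        logit + sum(w * c for w, c in zip(weights[k], clinical))
        for k, logit in enumerate(image_logits)
    ]
    probs = softmax(fused)
    return sorted(zip(CLASSES, probs), key=lambda pair: pair[1], reverse=True)


# Hypothetical inputs: image scores plus hand-set clinical weights.
image_logits = [2.0, 1.5, 0.5, 0.0, -1.0]
weights = [
    [0.0, 0.0, 0.0],  # digestive
    [0.0, 0.0, 0.0],  # respiratory
    [0.0, 1.0, 1.0],  # female_reproductive: nudged up for female, abdominal
    [0.0, 0.0, 0.0],  # breast
    [0.0, 0.0, 0.0],  # other
]
clinical = encode_clinical(age=60, sex="female", site="abdomen")
ranked = fuse_and_rank(image_logits, clinical, weights)
print([name for name, _ in ranked[:3]])
# ['female_reproductive', 'digestive', 'respiratory']
```

Adding the clinical term before the softmax lets age, sex, and sample site shift the ranking without discarding the image evidence. A real system would learn these weights from data rather than set them by hand.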

The results, validated across five independent testing sets totaling more than 27,000 cases, are striking.

TORCH correctly identified the primary organ system in 82.6 percent of cases on its first guess. More importantly for clinical practice, its top-three prediction contained the correct answer 98.9 percent of the time — meaning an oncologist given TORCH’s shortlist could almost certainly find the right answer among the candidates.

When compared directly against four practicing pathologists analyzing the same 495-case test set, TORCH outperformed all of them: both the junior pathologists (accuracy around 43 percent) and the senior specialists (accuracy around 63 percent). Its diagnostic scores were statistically significantly higher than those of every human examiner.

But the number that matters most isn’t about accuracy. It’s about survival.

27 Months vs. 17 Months

The researchers tracked 391 patients with CUP whose treatment plans were reviewed against what TORCH had predicted. In some cases, the chosen treatment aligned with TORCH’s prediction — the same organ system the AI suspected, the same drug protocol that would follow from that suspicion. In other cases, the treatment diverged.

The results of that retrospective comparison were stark.

Patients treated in alignment with TORCH’s predictions survived a median of 27 months. Those treated with discordant protocols, aimed at a different origin than the one TORCH predicted, survived a median of 17 months.
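Median survival in cohorts like this one is usually estimated with the Kaplan-Meier method, which accounts for patients who are still alive (censored) when follow-up ends. The article does not describe the study’s exact statistics, so treat this as a generic textbook sketch in Python. The function name and the seven-patient dataset are made up for illustration.

```python
def km_median_survival(times, events):
    """Kaplan-Meier estimate of median survival.

    times:  follow-up time per patient (e.g. months)
    events: 1 if the patient died at that time, 0 if censored
            (still alive or lost to follow-up)
    Returns the first time at which estimated survival drops to 0.5
    or below, or None if it never does.
    """
    data = sorted(zip(times, events))
    at_risk = len(data)
    survival = 1.0
    i = 0
    while i < len(data):
        t = data[i][0]
        deaths = 0
        removed = 0
        # Group every patient whose follow-up ends at this time point.
        while i < len(data) and data[i][0] == t:
            deaths += data[i][1]
            removed += 1
            i += 1
        if deaths:
            # Multiply by the conditional probability of surviving past t.
            survival *= 1 - deaths / at_risk
            if survival <= 0.5:
                return t
        at_risk -= removed  # deaths and censored patients leave the risk set
    return None


# Hypothetical cohort: months of follow-up and death (1) / censored (0).
times = [5, 8, 12, 17, 20, 24, 30]
events = [1, 1, 0, 1, 1, 0, 1]
print(km_median_survival(times, events))  # 20
```

This follows one common convention, reporting the first time at which estimated survival falls to 50 percent or below.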

Ten additional months. Not in a clinical trial, not in a carefully selected favorable-prognosis subgroup. In real patients with one of oncology’s most intractable diagnoses.

This is what it can look like when computational intelligence sharpens clinical intuition. And it is, when you sit with it, a profound thing.

What TORCH Is Actually Seeing

How does a neural network learn to identify pancreatic cancer in a fluid sample from the lung lining?

The honest answer is: not entirely in the way a pathologist would. TORCH doesn’t follow a checklist of known morphological features. Instead, through exposure to tens of thousands of labeled images, it learns spatial and textural patterns that correlate with specific origins — patterns that may not even have names in pathological vocabulary.

The researchers used attention heatmaps to visualize which image regions the model prioritized in its predictions. Human pathologists then assessed those highlighted regions and found them diagnostically relevant 87.7 percent of the time. TORCH was, in most cases, looking at the right things. It had discovered meaningful features, including architectural patterns such as glandular arrangements and papillary clusters and nuclear features such as chromatin density, and potentially also subtler features that defy easy description.
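Attention heatmaps of this kind typically come from models that assign a weight to each region of an image before combining them. The Python sketch below shows the generic attention-pooling pattern, as used in attention-based multiple-instance learning, on a few hypothetical image tiles. It assumes tile features already exist and uses a hand-set query vector where a trained model would use learned parameters. It illustrates the technique and is not TORCH’s documented mechanism.

```python
import math


def attention_pool(tile_features, query):
    """Attention pooling over image tiles.

    Each tile is scored by a dot product with `query`; a softmax turns the
    scores into weights that sum to 1 (the values a heatmap displays), and
    the weighted average of tile features is the pooled representation.
    """
    scores = [sum(f * q for f, q in zip(feat, query)) for feat in tile_features]
    m = max(scores)  # stabilize the exponentials
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    pooled = [
        sum(w * feat[d] for w, feat in zip(weights, tile_features))
        for d in range(len(query))
    ]
    return weights, pooled


# Four hypothetical tiles with 2-D features; the hand-set query favors
# the first feature dimension.
tiles = [[2.0, 0.1], [0.2, 0.3], [1.5, 0.0], [0.0, 1.0]]
query = [1.0, 0.0]
weights, pooled = attention_pool(tiles, query)
hottest = max(range(len(weights)), key=lambda i: weights[i])
print(hottest)  # 0
```

Mapping each tile’s weight back to its position on the smear yields the heatmap, while the pooled vector is what a downstream classifier would consume.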

This is the nature of deep learning applied to biology: it finds patterns in dimensions humans haven’t thought to measure. The biocomputer doesn’t just replicate human knowledge. It extends it.

The Junior Pathologist Effect

Perhaps the most practically important finding from the TORCH study isn’t about the AI’s standalone performance. It’s about what happens when junior pathologists use it as a tool.

When two junior pathologists worked without assistance, their top-1 accuracy was around 43 percent. When given TORCH’s predictions as a reference, their accuracy jumped to 62 percent — statistically indistinguishable from senior pathologists working alone.

This has enormous implications for global health equity. Cytopathology expertise is deeply unevenly distributed. Major cancer centers in wealthy countries have senior specialists who spend careers developing diagnostic intuition. Rural hospitals in low- and middle-income countries often have none. TORCH doesn’t eliminate the need for pathologists; it amplifies their capabilities, potentially bringing the diagnostic quality of an elite academic medical center to any hospital that can digitize its cytology slides and connect to the internet.

The Bigger Wave: AI Pathology in 2026

TORCH is not an isolated breakthrough. It’s one visible peak in a rising wave.

At Harvard’s Brigham and Women’s Hospital, the TOAD system — trained on more than 22,000 whole-slide images — can predict cancer origin across 18 different tissue types with 83 percent accuracy, and includes the correct diagnosis in its top-three predictions 96 percent of the time.

Paige Prostate, the first FDA-authorized AI pathology tool, was associated with a 7.3 percent reduction in false-negative prostate cancer diagnoses in the study supporting its authorization. The FDA has since granted Breakthrough Device Designation to Paige’s PanCancer Detect, an AI intended to support cancer detection across multiple anatomical sites simultaneously.

PathChat, developed at Harvard, goes further still: it can not only analyze a histopathological slide but conduct a multi-turn diagnostic conversation with a clinician, suggesting immunostains, proposing differential diagnoses, and reasoning through clinical context interactively. It too has received FDA Breakthrough Device Designation.

At Memorial Sloan Kettering, a model called AEON is reclassifying tumors previously labeled “not otherwise specified” into precise subtypes — including retroactively identifying the primary tumor type in cancers that were originally diagnosed as CUP. In most of those reclassified cases, patient survival aligned with what would have been expected for the newly identified cancer type. The data had been there all along. Human eyes had simply missed it.

Underlying all of this is a fundamental insight: histological slides are not static pictures. They are dense biological data. Every tissue section is a snapshot of millions of cells, each encoding the computational history of its lineage — the mutations it accumulated, the signals it responded to, the microenvironment it shaped. An AI trained on enough of these snapshots begins to read that history.

This is the biocomputer perspective applied to pathology: biological tissue as information, and AI as the reader that finally does it justice.

The Horizon: Multimodal AI and the End of CUP?

A January 2026 review in Cancer Biology & Medicine articulates the most ambitious vision: that CUP, as a category, may not survive the next decade.

The argument is this: CUP exists because medicine has relied on anatomy and visual morphology to classify cancer. Tumor taxonomy is organized around where a cancer starts. But AI systems that can integrate genomic data, RNA expression profiles, histological images, liquid biopsy signals, and clinical metadata into a unified prediction may soon make the “unknown primary” label obsolete. Not because we’ll always find the original tumor — but because it won’t matter anymore.

If an AI can tell you, with high confidence, that a patient’s cancer behaves like ovarian cancer regardless of where the metastases appear, you can treat it like ovarian cancer. The anatomical origin becomes secondary to the biological identity — what genes it expresses, what pathways drive its growth, what therapies its molecular profile suggests.

This represents a paradigm shift in oncology: from anatomy-based classification to computation-based classification. From “Where did this start?” to “What is this?”

TORCH is a step in that direction. But it’s a step. The full journey will require AI systems that fuse pathological imaging with the complete molecular portrait of a tumor — a biocomputer diagnostic engine that sees not just the cells, but the full computational logic running inside them.

Caveats and Honest Limits

None of this means the problem is solved. TORCH was developed on data from four hospitals in northern and eastern China. How it performs on patients from different ethnic backgrounds, different clinical environments, or different staining protocols remains to be validated. The model identifies origin at the organ-system level — digestive system, respiratory system, female reproductive system — not at the precise organ level that would ideally guide targeted therapy.

More importantly, TORCH has not yet been validated in a randomized controlled trial. The survival benefit observed in the study was retrospective and observational. Correlation is not causation, and full proof will require prospective trials in which patients are randomly assigned to AI-guided treatment or to standard of care.

These are real limitations. The researchers acknowledge them honestly.

But the direction of travel is unmistakable.

What Biology Has Always Known

There is something worth pausing on, in the deep story of CUP and AI pathology.

The body has always known where a cancer came from. Every cell carries its origin in its molecular identity — in its epigenetic marks, its gene expression signature, its protein profile, its morphology. The information was always there, in the tissue, encoded in the biology. Medicine simply lacked the tools to read it.

An AI system like TORCH doesn’t invent a new kind of knowledge. It reads knowledge that was already present — biological computation, billions of years in the making, finally interpreted by a machine trained to recognize its patterns.

That is the core premise of biocomputer thinking. Living systems are not merely like computers. They are computers: extraordinarily sophisticated, parallel, adaptive information processors operating in a biological substrate. When we build AI systems capable of reading that substrate, we gain access to a layer of knowledge medicine has long been reaching for and is only beginning to grasp.

For the patients sitting in that hospital room — the ones told that their cancer has no name — that grasp is coming. Not fast enough. Not perfectly. But it is coming.

And when it arrives, it will look a great deal like this: a deep neural network, trained on tens of thousands of images, quietly reading the cellular fingerprints that biology left behind — finding the origin in the pattern, giving the nameless cancer its name, and giving patients more time.

Sources: Liu et al., Nature Medicine, 2024; Lu et al., Nature, 2021; Li & Shen, Cancer Biology & Medicine, 2026; Marra et al., Annals of Oncology, 2025; American Cancer Society; Swedish Cancer Registry population data.