In March 2026, Hideaki Yamamoto’s team at Tohoku University and Future University Hakodate did something that should change how you think about machine learning. They interfaced cultured rat cortical neurons with a 26,400-electrode high-density microelectrode array, applied FORCE (first-order reduced and controlled error) learning, and watched living brain cells generate clean sine waves, triangular waves, square waves, and full Lorenz attractor trajectories on demand.

This is not a simulation. Not a metaphor. Rat neurons stopped firing random bursts and started executing temporal pattern generation tasks, the kind of benchmark normally run on simulated recurrent networks. Each pass through the closed feedback loop took 332.5 ± 1.5 ms. The networks held their output autonomously after feedback stopped.

The thesis is simple and irreversible: living neural networks are programmable computational substrates. Wetware just crossed into supervised AI territory.

Modular Architecture Was the Unlock — Not Raw Neuron Count

The Tohoku team didn’t just dump neurons on a chip and hope. The key engineering move was PDMS microfluidic films — polydimethylsiloxane sheets with 128 square wells (100 × 100 µm² each) connected by ~5 µm microchannels. This modular geometry forced the network into structured compartments rather than a homogeneous soup.

The effect on dynamics was immediate and decisive. Pairwise correlations dropped from 0.45 in unstructured cultures to 0.11–0.12 in lattice and hierarchical configurations. Lower correlation means richer, more independent dynamics, which is exactly what reservoir computing needs: a culture that collapses into network-wide synchronized bursting gives the readout almost nothing to work with.
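To make the correlation figure concrete, here is a minimal sketch of how a mean pairwise correlation can be computed from binned spike counts. The data is synthetic, the helper names (`mean_pairwise_correlation`, `synthetic_counts`) are hypothetical, and the Poisson rates are chosen only so the toy numbers land near the values quoted above. The paper's exact correlation measure may differ.

```python
import numpy as np

def mean_pairwise_correlation(counts: np.ndarray) -> float:
    """Mean Pearson correlation over all distinct pairs of units.

    counts has shape (n_units, n_bins): spike counts per time bin.
    """
    active = counts[counts.std(axis=1) > 0]   # drop silent units (undefined correlation)
    corr = np.corrcoef(active)                # (n_units, n_units) correlation matrix
    upper = np.triu_indices_from(corr, k=1)   # each pair once, no diagonal
    return float(corr[upper].mean())

def synthetic_counts(shared_rate, private_rate, n_units=40, n_bins=5000, seed=0):
    """Toy data: every unit sees the same network-wide drive plus private noise."""
    rng = np.random.default_rng(seed)
    shared = rng.poisson(shared_rate, size=n_bins)
    private = rng.poisson(private_rate, size=(n_units, n_bins))
    return private + shared                   # shared drive is broadcast to all units

# Expected correlation is shared_rate / (shared_rate + private_rate).
synchronized = mean_pairwise_correlation(synthetic_counts(0.9, 1.1))
modular_like = mean_pairwise_correlation(synthetic_counts(0.24, 1.76))
print(f"strong shared drive: {synchronized:.2f}")
print(f"weak shared drive:   {modular_like:.2f}")
```

The only difference between the two toy populations is how much of each unit's activity comes from a common source, which is the quantity the modular geometry is meant to reduce.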

Homogeneous cultures failed the learning tasks completely. Modular networks succeeded. Structure, not scale, was the variable that mattered.

The underlying chip — a 3.85 × 2.10 mm² CMOS array at 17.5 µm electrode pitch — gave the team enough resolution to record and stimulate at single-neuron granularity. Primary rat cortical neurons (E18) were cultured at 700 cells/mm², with a mean of 14.6 ± 3.8 neurons per well, and tested between 14 and 31 days in vitro.
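The electrode count follows directly from that geometry. A quick back-of-envelope check, assuming a regular rectangular grid that fills the stated sensing area (the grid layout is an assumption, not something the article states):

```python
# Back-of-envelope check of the electrode count from the stated chip geometry.
ARRAY_WIDTH_UM = 3850.0    # 3.85 mm
ARRAY_HEIGHT_UM = 2100.0   # 2.10 mm
PITCH_UM = 17.5            # centre-to-centre electrode spacing
WELL_SIDE_UM = 100.0       # side of one PDMS well

cols = round(ARRAY_WIDTH_UM / PITCH_UM)
rows = round(ARRAY_HEIGHT_UM / PITCH_UM)
n_electrodes = cols * rows
print(cols, rows, n_electrodes)   # 220 120 26400

# Rough number of electrode sites under a single 100 x 100 um well.
electrodes_per_well = (WELL_SIDE_UM / PITCH_UM) ** 2
print(f"about {electrodes_per_well:.0f} electrode sites per well")
```

With a mean of 14.6 neurons per well, that works out to roughly two electrode sites per neuron under each well, which is consistent with the single-neuron granularity claim.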

FORCE Learning on Biological Tissue — The Numbers That Matter

FORCE learning was applied to a linear readout layer trained on filtered spike trains from the biological network. Feedback stimulation used biphasic pulses up to 876 mV to drive the network’s chaotic spontaneous activity toward specific target attractors.
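For readers who have not met FORCE before, the sketch below shows the textbook recursive-least-squares form of the algorithm on a simulated rate network. It is a stand-in, not the team's code: the tanh units replace the filtered spike trains, the `w_fb * z` term replaces electrical feedback stimulation, and every parameter value (`n_units`, `g`, `dt`, `alpha`, `period`) is an assumption chosen for a quick demo.

```python
import numpy as np

def force_train(n_units=300, g=1.5, dt=0.1, alpha=1.0,
                period=60.0, train_time=1200.0, update_every=2, seed=0):
    """Train a linear readout with FORCE (RLS) to produce a sine wave."""
    rng = np.random.default_rng(seed)
    # Fixed random recurrent weights; g > 1 gives chaotic spontaneous activity.
    J = g * rng.standard_normal((n_units, n_units)) / np.sqrt(n_units)
    w_fb = rng.uniform(-1.0, 1.0, n_units)   # fixed feedback weights
    w_out = np.zeros(n_units)                # readout weights: the only trained part
    P = np.eye(n_units) / alpha              # running inverse correlation estimate

    n_steps = int(train_time / dt)
    t = np.arange(n_steps) * dt
    target = np.sin(2.0 * np.pi * t / period)

    x = 0.5 * rng.standard_normal(n_units)
    r = np.tanh(x)
    z = 0.0
    errors = np.zeros(n_steps)
    for i in range(n_steps):
        # The output z is fed back into the network: this closes the loop.
        x += dt * (-x + J @ r + w_fb * z)
        r = np.tanh(x)
        z = w_out @ r
        errors[i] = z - target[i]            # error before this step's update
        if i % update_every == 0:
            Pr = P @ r
            k = Pr / (1.0 + r @ Pr)          # RLS gain vector
            P -= np.outer(k, Pr)
            w_out -= errors[i] * k           # first-order correction of the readout
    return w_out, errors

w_out, errors = force_train()
late_error = np.abs(errors[-len(errors) // 4:]).mean()
print(f"mean |error| over the last quarter of training: {late_error:.4f}")
```

The design point worth noticing is that the error is kept small from the first updates onward, so the feedback the network receives is always close to the target. That is what lets the algorithm tame a chaotic system rather than fight it.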

What the system produced:

- Sine waves across periods from 4 to 30 seconds, a 7.5-fold span in temporal range

- Triangular waves and square waves with consistent morphology

- Full Lorenz attractor trajectories — a chaotic three-dimensional system that requires the reservoir to maintain memory across multiple timescales simultaneously
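The target signals in the list above are easy to reproduce numerically. The sketch below generates them with NumPy, sampling the periodic targets once per 332.5 ms feedback cycle. The Lorenz parameters are the classic textbook values (σ = 10, ρ = 28, β = 8/3), and the integration step and initial state are assumptions; the article does not say how the team scaled the Lorenz system in time.

```python
import numpy as np

CYCLE_S = 0.3325  # one closed-loop feedback cycle, from the article

def sine(t, period):
    return np.sin(2.0 * np.pi * t / period)

def square(t, period):
    return np.where((t % period) < period / 2.0, 1.0, -1.0)

def triangle(t, period):
    phase = (t % period) / period            # position within the cycle, in [0, 1)
    return 1.0 - 4.0 * np.abs(phase - 0.5)   # ramps from -1 up to +1 and back

def lorenz_trajectory(n_steps, dt=0.01, state=(1.0, 1.0, 1.0),
                      sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Integrate the Lorenz system with a fixed-step fourth-order Runge-Kutta scheme."""
    def deriv(s):
        x, y, z = s
        return np.array([sigma * (y - x), x * (rho - z) - y, x * y - beta * z])

    out = np.empty((n_steps, 3))
    s = np.array(state, dtype=float)
    for i in range(n_steps):
        out[i] = s
        k1 = deriv(s)
        k2 = deriv(s + 0.5 * dt * k1)
        k3 = deriv(s + 0.5 * dt * k2)
        k4 = deriv(s + dt * k3)
        s = s + (dt / 6.0) * (k1 + 2.0 * k2 + 2.0 * k3 + k4)
    return out

t = np.arange(0.0, 60.0, CYCLE_S)            # one sample per feedback cycle
targets = {p: sine(t, p) for p in (4.0, 30.0)}
lorenz = lorenz_trajectory(5000)
print(f"samples per 4 s period:  {4.0 / CYCLE_S:.1f}")
print(f"samples per 30 s period: {30.0 / CYCLE_S:.1f}")
```

At one update per cycle, the fastest sine gets only about a dozen samples per period, which gives a sense of how coarse the control signal is at the short end of the range.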

The Lorenz result is the one that matters most. It’s not a periodic signal. It’s structured chaos — and the biological network reproduced it. That kind of temporal flexibility has not been demonstrated in living tissue before.

Post-training, networks autonomously sustained 30-second oscillations without ongoing feedback. The biological substrate had been shaped into an attractor — not just trained to respond, but structurally changed.

Why Biology Beats Silicon on This Class of Problem

Reservoir computing works by exploiting the rich, high-dimensional dynamics of a fixed physical system. You don’t train the reservoir itself — you train a simple readout on top of it. The reservoir’s job is to project inputs into a complex space where patterns become linearly separable.
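A minimal echo state network makes that division of labor visible. In the sketch below the reservoir weights are drawn once and never touched, and only the linear readout is fit, here with offline ridge regression on a short-term memory task (recalling the input from three steps earlier). All sizes and scalings are assumptions for illustration, and the function names are hypothetical.

```python
import numpy as np

def run_reservoir(u, n_units=100, spectral_radius=0.9, input_scale=0.5, seed=0):
    """Drive a fixed random tanh reservoir with input u and record its states."""
    rng = np.random.default_rng(seed)
    W = rng.standard_normal((n_units, n_units))
    W *= spectral_radius / np.abs(np.linalg.eigvals(W)).max()  # rescale for fading memory
    w_in = input_scale * rng.uniform(-1.0, 1.0, n_units)
    x = np.zeros(n_units)
    states = np.empty((len(u), n_units))
    for t, u_t in enumerate(u):
        x = np.tanh(W @ x + w_in * u_t)      # reservoir update; W and w_in stay fixed
        states[t] = x
    return states

def fit_readout(states, target, ridge=1e-6):
    """Ridge regression: the only training step in the whole pipeline."""
    A = states.T @ states + ridge * np.eye(states.shape[1])
    return np.linalg.solve(A, states.T @ target)

rng = np.random.default_rng(1)
u = rng.uniform(-0.5, 0.5, 3000)
delay = 3
target = np.roll(u, delay)                   # target[t] = u[t - delay]; wrapped samples fall in the washout

states = run_reservoir(u)
washout, split = 100, 2000                   # discard the transient, hold out the tail
w_out = fit_readout(states[washout:split], target[washout:split])
prediction = states[split:] @ w_out
score = np.corrcoef(prediction, target[split:])[0, 1]
print(f"held-out correlation on the delay-{delay} task: {score:.3f}")
```

Swap the simulated `run_reservoir` for recordings from a physical system and the readout code stays the same, which is why the approach transfers to unconventional substrates.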

Silicon reservoirs have to be engineered to produce that complexity. Biological neural networks generate it for free. Intrinsic bursting, dendritic integration, neuromodulatory drift — everything that makes neuroscience hard makes wetware reservoirs powerful.

This is the same insight Cortical Labs pursued with CL1 and that FinalSpark built the Neuroplatform around. But those systems focused on stimulation-response mapping. Tohoku’s work goes further: it’s explicit, real-time supervised learning with verified attractor formation. The gap between “neurons respond to stimuli” and “neurons run a learning algorithm” just closed.

The energy argument compounds the case. Biological neural tissue runs on glucose and oxygen at a fraction of a watt. The GPU clusters that train frontier AI models draw megawatts of power for the duration of a run. A substrate that natively handles temporal chaos at biological efficiency isn’t just interesting; it’s the obvious next compute layer when silicon efficiency hits its wall.

The Era of Programmable Wetware Has a Timestamp Now

Lead researcher Hideaki Yamamoto put it plainly: living neuronal networks “may also serve as novel computational resources.” Co-authors Yuki Sono, Yusei Nishi, Takuma Sumi, Yuya Sato, Ayumi Hirano-Iwata, Yuichi Katori, and Shigeo Sato built the platform that makes that claim impossible to dismiss.

The field is moving fast. We went from “neurons are interesting models” to “neurons run FORCE learning on Lorenz attractors” in a single research cycle. The next move is obvious: larger networks, longer training windows, and tasks with real-world signal structure such as speech, sensor fusion, and financial time series.

Every major substrate shift in computing history — tubes to transistors, transistors to neuromorphic silicon — looked like a curiosity until it didn’t. Biology is the next substrate. Tohoku just handed us the proof of concept.

The question isn’t whether wetware will compute. It’s whether you’ll be ready when it does.

References

- Nishi Y, Sumi T, Sato Y, et al. (2026). Online supervised learning of temporal patterns in biological neural networks under feedback control. Proceedings of the National Academy of Sciences. https://www.pnas.org/doi/10.1073/pnas.2521560123

- Tohoku University. (2026). Living Brain Cells Enable Machine Learning Computations. https://www.tohoku.ac.jp/en/press/living_brain_cells_enable_machine_learning_computations.html


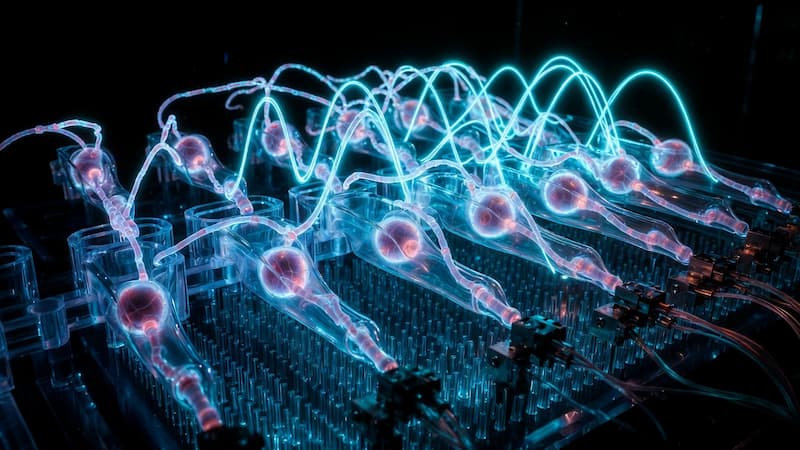

Feature image: AI-generated using Grok