The computing world is hitting a wall that no roadmap can paper over. Training a frontier AI model now consumes energy equivalent to powering hundreds of households for months. Meanwhile, the three-pound human brain inside your skull delivers flexible, context-aware intelligence on roughly 20 watts, about the power draw of a dim light bulb. That gap isn’t a design flaw. It’s an indictment of how we’ve been building computers.

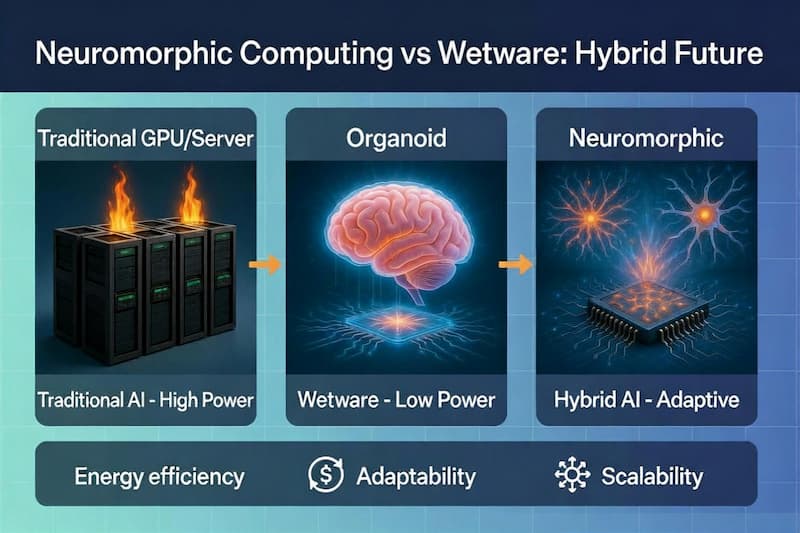

Two approaches have emerged to close it. Neuromorphic computing reimagines silicon to behave like neural tissue. Wetware — biological computing — skips the simulation entirely and uses actual living neurons as the substrate. They share the same inspiration: the brain. But they take fundamentally different routes, carry different trade-offs, and increasingly, they’re converging into hybrid systems that neither camp anticipated.

Understanding both isn’t just an academic exercise. It’s a map of where intelligence itself is heading.

Neuromorphic Hardware Is Already Solving Problems GPUs Can’t

The core insight behind neuromorphic design is deceptively simple: stop separating memory from processing. Every time a conventional chip shuttles data between a processor and RAM, it burns energy. The brain doesn’t work that way — neurons integrate memory and computation at the same synapse. Neuromorphic chips replicate this by building event-driven spiking neural networks directly into hardware.

In practice, this means components only “fire” when there’s meaningful change in the signal, staying idle the rest of the time. The energy savings are real. Intel’s Loihi 2 demonstrated this in 2025, when researchers at Sandia National Laboratories used it to solve partial differential equations (the mathematics underlying fluid dynamics and structural mechanics) with orders-of-magnitude lower energy consumption than GPU clusters running equivalent simulations. Intel’s Hala Point system, packing 1.15 billion artificial neurons, has become a benchmark platform for workloads where latency and power matter more than raw throughput.
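To make the “fire only on meaningful change” idea concrete, here is a minimal software sketch, not a model of Loihi 2 or any real chip. It pairs a send-on-delta encoder with a leaky integrate-and-fire neuron. The function names, the threshold, and the leak value are all illustrative assumptions.

```python
def delta_events(samples, threshold):
    """Emit (step, change) only when the signal has moved by at least
    `threshold` since the last emitted event; otherwise stay silent."""
    events = []
    last = samples[0]
    for step, value in enumerate(samples[1:], start=1):
        change = value - last
        if abs(change) >= threshold:
            events.append((step, change))
            last = value
    return events


def lif_spike_times(events, n_steps, leak=0.9, fire_at=1.0):
    """Leaky integrate-and-fire neuron driven by sparse events.
    Returns the time steps at which the neuron spikes."""
    by_step = dict(events)
    potential = 0.0
    spikes = []
    for t in range(n_steps):
        # The potential decays every step and only grows when an event arrives.
        potential = potential * leak + abs(by_step.get(t, 0.0))
        if potential >= fire_at:
            spikes.append(t)
            potential = 0.0  # reset after firing
    return spikes


# A signal that is flat, steps up twice, then is flat again (hypothetical data).
signal = [0.0] * 5 + [0.6, 1.2] + [1.2] * 8
events = delta_events(signal, threshold=0.5)
spikes = lif_spike_times(events, n_steps=len(signal))
print(f"{len(signal)} samples -> {len(events)} events -> spikes at {spikes}")
```

The flat stretches of the signal generate no events at all, which is where the savings come from: work scales with how much the input changes, not with how long it runs.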

What neuromorphic systems deliver today: reliable, manufacturable, deployable hardware that brings brain-like efficiency to robotics, sensory processing, and physics simulations. The limitation is equally clear — they approximate the brain. On-chip plasticity is real, but it’s a software-defined imitation of biological learning, not the thing itself.

Wetware Takes the Inspiration Literally

Cortical Labs’ CL1, released to market in early 2026, is the clearest proof that wetware has crossed from research curiosity to commercial reality. The device cultivates between 200,000 and 800,000 living human neurons on a silicon chip, encased in a closed life-support system designed to keep the culture viable for months at a time. Developers interact with it via Python. The neurons form dynamic networks in real time, adapting to inputs in ways that no silicon approximation currently replicates.

The CL1 is not a prototype. Cortical Labs has announced data center deployments in Melbourne and Singapore, where arrays of CL1 units will provide biological compute at infrastructure scale — a meaningful signal that the industry is treating this seriously.

FinalSpark’s Neuroplatform takes a different approach: cloud access to living neural cultures for research institutions that don’t want to maintain their own biological hardware. It’s the AWS model applied to neurons. Researchers at dozens of institutions are already using it to probe questions about learning, memory formation, and drug response that silicon simulations can’t adequately model.

What wetware delivers that silicon cannot: genuine biological plasticity, self-organization, and the capacity to generalize from minimal data. A neuron doesn’t need ten million labeled examples to recognize a pattern. It adapts. The challenges are real: cell longevity, ethical frameworks around consciousness, and interfacing standards are all unsolved. But none of them looks insurmountable.

The Energy Math Favors Biology — By a Lot

FinalSpark has published comparisons suggesting their Neuroplatform operates at up to 100,000× better energy efficiency than silicon GPUs for certain adaptive learning tasks. Even conservative estimates put biological neural tissue at a significant efficiency advantage for workloads requiring continuous adaptation rather than batch processing.

This matters because AI’s energy crisis is not a future problem. Data centers are already straining power grids. The projections for compute demand over the next decade assume silicon scaling that the laws of physics increasingly resist. Biological computing doesn’t solve every problem — it introduces its own infrastructure costs in temperature control, nutrient delivery, and biological maintenance. But for adaptive inference workloads, the thermodynamic case for neurons over transistors is getting harder to dismiss.
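The trade-off above is ultimately arithmetic: energy is power multiplied by time, and life-support overhead counts against biology. The sketch below shows the calculation only. The 20-watt figure is the brain estimate quoted earlier in this article; the life-support and accelerator wattages are placeholder assumptions, not measurements of any product. Power draw alone is also not efficiency, so a fair comparison would divide by the useful work each system completes.

```python
def daily_energy_kwh(compute_watts, overhead_watts=0.0, hours=24.0):
    """Energy used in one day: (compute + overhead power) x time, in kWh."""
    return (compute_watts + overhead_watts) * hours / 1000.0


BRAIN_WATTS = 20.0          # brain estimate quoted in this article
LIFE_SUPPORT_WATTS = 200.0  # ASSUMPTION: placeholder for cooling, pumps, nutrients
ACCELERATOR_WATTS = 700.0   # ASSUMPTION: placeholder for one silicon accelerator

brain_only = daily_energy_kwh(BRAIN_WATTS)
bio_with_overhead = daily_energy_kwh(BRAIN_WATTS, LIFE_SUPPORT_WATTS)
silicon = daily_energy_kwh(ACCELERATOR_WATTS)

print(f"tissue alone:        {brain_only:.2f} kWh/day")
print(f"tissue + overhead:   {bio_with_overhead:.2f} kWh/day")
print(f"silicon accelerator: {silicon:.2f} kWh/day")
```

With these placeholder numbers the overhead, not the tissue, dominates the biological side of the ledger, which is why infrastructure costs belong in any honest efficiency claim.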

Neuromorphic hardware sits in an interesting middle position: better than conventional silicon, but constrained by the fact that it’s still silicon. The brain’s efficiency comes from electrochemical signaling at the synapse level, not from clever circuit design. Neuromorphic chips can approximate this. They cannot replicate it.

Traditional AI burns energy. Wetware runs lean. Hybrid systems aim to combine both strengths.

Neuromorphic Twins — The Hybrid That Changes Both Fields

The concept generating the most excitement in early 2026 research is the Neuromorphic Twin: a silicon-based digital replica that emulates, communicates with, and co-evolves alongside a living biological neural network in real time. A paper published in Nature Communications in February 2026 formalized the architecture — a bidirectional interface where the neuromorphic hardware reads biological signals, provides calibrated stimulation, and adapts its own model to match the organoid’s evolving state.
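The read, stimulate, adapt cycle described above can be sketched as a toy closed loop. This is not the architecture from the paper. The “culture” is a single simulated firing rate, the twin is a running estimate of it, and every name and constant here is a hypothetical chosen for illustration.

```python
import random


def run_twin_loop(steps=200, learning_rate=0.1, gain=0.05,
                  target_rate=8.0, seed=0):
    """Toy bidirectional loop between a simulated culture and its twin."""
    rng = random.Random(seed)
    culture_rate = 5.0   # hidden state of the simulated culture (Hz)
    twin_estimate = 0.0  # the twin's model of that state
    errors = []
    for _ in range(steps):
        # 1. Read: a noisy recording of the culture's activity.
        observed = culture_rate + rng.gauss(0.0, 0.2)
        # 2. Adapt: move the twin's model toward what was observed.
        twin_estimate += learning_rate * (observed - twin_estimate)
        # 3. Stimulate: calibrate the pulse from the twin's current model.
        stimulation = gain * (target_rate - twin_estimate)
        # The simulated culture responds to stimulation.
        culture_rate += stimulation
        errors.append(abs(culture_rate - twin_estimate))
    return culture_rate, twin_estimate, errors


final_culture, final_twin, errors = run_twin_loop()
print(f"culture={final_culture:.2f} Hz, twin={final_twin:.2f} Hz")
```

The point of the sketch is the coupling: the twin never sees the culture’s true state, only noisy readings, yet the stimulation it issues depends entirely on its own model, so the two have to converge together.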

The immediate applications are clinical: personalized neuroprosthetics where the digital twin adapts to a patient’s specific neural architecture rather than operating on population averages. The longer-term implications are broader. A system where silicon and neurons co-evolve may exhibit capabilities that neither achieves independently — the precision and speed of hardware combined with the adaptive depth of biological learning.

Cortical Labs’ CL1 already embodies a primitive version of this architecture. The silicon chip provides structure, interfacing, and life support; the neurons provide the intelligence layer. Future iterations of this approach will blur the line between neuromorphic hardware and wetware until the distinction becomes a matter of degree rather than kind.

The Question Worth Sitting With

At BioComputer, we cover both fields because they’re converging, not competing. But it’s worth being precise about what each represents. Neuromorphic computing is engineering. It’s humans designing silicon to behave more like the brain — a legitimate and productive pursuit. Wetware is something closer to co-option: recruiting biology’s four-billion-year R&D investment and deploying it toward problems we’ve defined.

The difference isn’t just philosophical. It shapes what questions each approach can answer. Neuromorphic systems let you ask: how efficiently can we approximate neural computation? Wetware lets you ask: what does actual neural computation reveal about learning, memory, and adaptation that we haven’t thought to model yet?

The second question may be more valuable. Not because wetware is “better” in some absolute sense, but because the brain’s architecture encodes answers to problems we don’t yet know how to formalize. Living neurons surprise us. That’s not a bug in the research methodology. It’s the point.

The convergence of neuromorphic scaffolding with living neural tissue isn’t science fiction — it’s shipping hardware and published architecture in 2026. What comes next will depend less on whether we choose silicon or biology, and more on how honestly we grapple with what we’re still failing to understand about intelligence itself.

References

- Schuman, C. et al. (2025). Neuromorphic computing for partial differential equations. Nature Machine Intelligence. https://www.nature.com/articles/s42256-025-00001-0

- Intel Labs. (2025). Hala Point: A neuromorphic research system with 1.15 billion neurons. Intel Newsroom. https://newsroom.intel.com/news/intel-hala-point

- Cortical Labs. (2026). CL1 Biological Computer — Product Overview. https://corticallabs.com/cl1

- FinalSpark. (2025). Neuroplatform: Remote access to living neural cultures. https://finalspark.com/neuroplatform

- Yoo, J. et al. (2026). Neuromorphic Twins for adaptive neuroprosthetics. Nature Communications. https://www.nature.com/ncomms

- Kagan, B. et al. (2022). In vitro neurons learn and exhibit sentience when embodied in a simulated game world. Neuron. https://doi.org/10.1016/j.neuron.2022.09.001

Feature image: AI-generated using Grok.